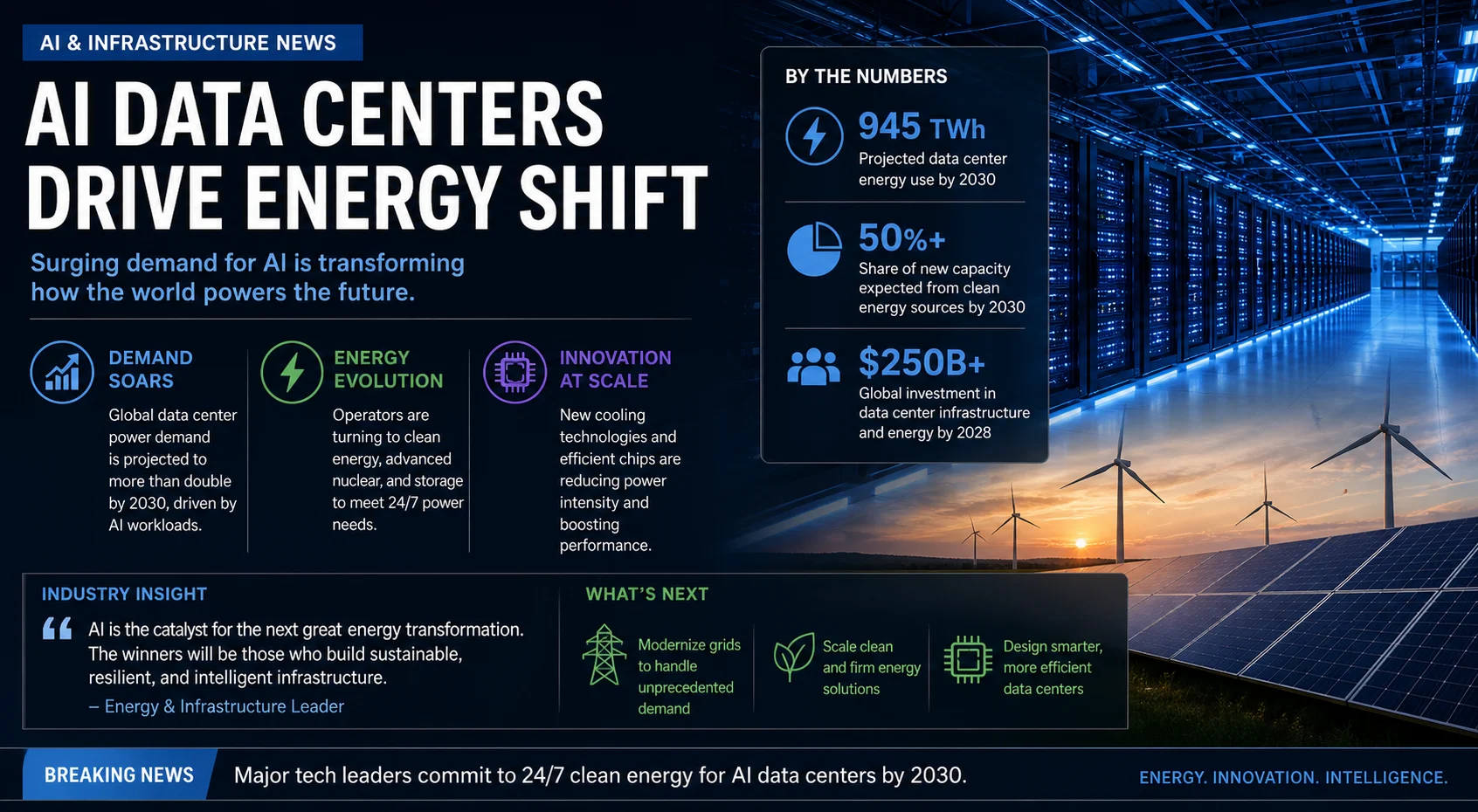

In May 2026, the intersection of artificial intelligence and global energy infrastructure has reached a critical “bottleneck” phase. While AI capabilities continue to scale, the physical reality of the power grid has become the primary constraint on digital progress.

AI Data Center Energy News Today: The 1GW Frontier

The most significant recent shift in AI data center energy is the emergence of the “Gigawatt Campus”.

- The 1GW Milestone: As of 2026, individual hyperscale data center projects have reached a peak draw of 1 gigawatt (GW), which is roughly the output of a full-scale nuclear reactor.

- Global Consumption: Data center electricity consumption is projected to approach 1,050 TWh in 2026. If the data center industry were a country, it would be the fifth-largest electricity consumer in the world, ranking between Japan and Russia.

- Capacity Expansion: To maintain AI leadership, estimates suggest the U.S. alone will need 50 GW of new electric capacity by 2028, several times the peak electricity demand of New York City.
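To put the power (GW) and energy (TWh) figures above on the same footing, here is a minimal Python sketch of the conversion. It assumes a load that runs flat out all year, which a real campus would not do, so the 1 GW result is an upper bound. The function names are illustrative only.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def continuous_gw_to_twh_per_year(gw: float) -> float:
    """Energy in TWh used over a year by a load drawing `gw` gigawatts nonstop."""
    gwh = gw * HOURS_PER_YEAR
    return gwh / 1000  # 1 TWh = 1000 GWh


def twh_per_year_to_average_gw(twh: float) -> float:
    """Average power in GW implied by an annual energy total in TWh."""
    return twh * 1000 / HOURS_PER_YEAR


if __name__ == "__main__":
    # A 1 GW campus at constant full load: about 8.76 TWh per year.
    print(f"1 GW campus: {continuous_gw_to_twh_per_year(1.0):.2f} TWh/year")
    # 1,050 TWh per year corresponds to roughly 120 GW of average draw.
    print(f"1,050 TWh/year: {twh_per_year_to_average_gw(1050):.0f} GW average")
```

Read this way, the projected global total is equivalent to roughly 120 gigawatt-scale campuses running continuously.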

Breaking Down AI Data Center Energy Demand News

The explosive growth in AI data center energy demand is driven by both the training of frontier models and the massive scale of daily inference.

The Training vs. Inference Divide

- Frontier Model Training: Training a single next-generation AI model by 2027 is estimated to require up to 5 GW of power.

- The Inference Surge: While training is energy-intensive, roughly 80-90% of total AI computing power is actually consumed during the inference stage (responding to user queries).

- Query Energy Intensity: A standard ChatGPT query consumes approximately 0.3 to 0.34 watt-hours, roughly on par with the 0.0003 kWh (0.3 watt-hours) commonly cited for a traditional Google search. The per-query figure is small; the aggregate load comes from query volume.
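The per-query figures above are quoted in mixed units, so a short Python sketch can make the comparison explicit and show how the totals scale with volume. The daily query count below is a hypothetical placeholder, not a figure from this article, and the helper names are illustrative.

```python
WH_PER_KWH = 1000


def kwh_to_wh(kwh: float) -> float:
    """Convert kilowatt-hours to watt-hours."""
    return kwh * WH_PER_KWH


def daily_energy_mwh(wh_per_query: float, queries_per_day: int) -> float:
    """Total daily energy in MWh for a given per-query cost and query volume."""
    return wh_per_query * queries_per_day / 1_000_000  # 1 MWh = 1,000,000 Wh


# ASSUMPTION: illustrative volume only; the article gives no query count.
HYPOTHETICAL_QUERIES_PER_DAY = 1_000_000_000

if __name__ == "__main__":
    search_wh = kwh_to_wh(0.0003)  # the search figure expressed in Wh
    print(f"Search query: {search_wh:.2f} Wh")
    for wh in (0.3, 0.34):  # the quoted ChatGPT range
        mwh = daily_energy_mwh(wh, HYPOTHETICAL_QUERIES_PER_DAY)
        print(f"{wh} Wh/query at 1e9 queries/day: {mwh:.0f} MWh/day")
```

Under that assumed volume, the quoted range works out to about 300 to 340 MWh per day, which is why inference volume matters more than the cost of any single query.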

The “Nuclear Option” and Clean Energy Breakthroughs

As hyperscalers exhaust local grid capacity, they are pivoting toward nuclear energy as a centerpiece of their infrastructure strategy.

- Major Nuclear Deals: Big Tech companies have signed contracts for over 10 GW of new nuclear capacity in the U.S. over the past year.

- Three Mile Island Restart: Microsoft has signed a 20-year power purchase agreement with Constellation Energy, which plans to invest about $1.6 billion to restart Unit 1 at Three Mile Island, with a target of powering Microsoft's AI data centers by 2028.

- The Rise of SMRs:

  - Google has signed a deal for a fleet of Small Modular Reactors (SMRs) from Kairos Power, targeting a first reactor by 2030 and up to 500 MW by 2035.

  - Amazon has invested in X-energy with a goal to deploy up to 5 GW of SMRs by 2039.

  - Oracle is designing a data center powered exclusively by on-site SMRs, requiring over 1,000 MW (1 GW).

Regulatory and Grid Constraints: Shifting the Burden

The rapid rise in AI data center energy demand has triggered a wave of new regulations designed to protect local ratepayers.

- Regulatory Pivot: In the first six weeks of 2026, over 300 data center-related bills were introduced across 30 U.S. states.

- The POWER Act: Illinois is considering the “Protecting Our Water, Energy, and Ratepayers (POWER) Act,” which targets hyperscale data centers exceeding 50 MW. It requires these facilities to pay for their own energy generation from new renewable sources to prevent cost-shifting to residential consumers.

- Local Impact: While data centers account for roughly 4% of global electricity demand, they can consume nearly 80% of local electricity demand in hubs like Dublin.

Innovation Spotlight: Hardware and Cooling

To manage the massive heat generated by high-density AI chips, the industry is abandoning traditional air cooling.

- GPU Density: A single rack of modern GPUs can draw 60 to 80 kW of power—far exceeding the 20-25 kW limit of traditional air cooling.

- Liquid Cooling Dominance: Direct Liquid Cooling (DLC) and immersion cooling are becoming mandatory for AI training clusters, as they can handle densities of 100 kW per rack and above.

- Heat Reuse: Operators are increasingly using liquid cooling systems to capture and redirect waste heat to warm residential buildings or support local agriculture, turning a byproduct into a resource.
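The density thresholds above amount to a simple decision rule, sketched here in Python. The 25 kW cutoff is the upper end of the air-cooling range quoted in this section; treating it as a hard limit is a simplification, and the function names are illustrative.

```python
# Upper end of the 20-25 kW air-cooling range quoted above (treated as a hard cutoff).
AIR_COOLING_LIMIT_KW = 25.0


def cooling_for_rack(rack_kw: float) -> str:
    """Return 'air' or 'liquid' for a rack drawing `rack_kw` kilowatts."""
    if rack_kw <= 0:
        raise ValueError("rack power must be positive")
    return "air" if rack_kw <= AIR_COOLING_LIMIT_KW else "liquid"


def overshoot_factor(rack_kw: float) -> float:
    """How many times the air-cooling limit a rack's draw represents."""
    return rack_kw / AIR_COOLING_LIMIT_KW


if __name__ == "__main__":
    for kw in (15, 25, 60, 80, 100):
        print(f"{kw} kW rack: {cooling_for_rack(kw)} "
              f"({overshoot_factor(kw):.1f}x the air limit)")
```

By this rule, a 60 to 80 kW GPU rack sits at roughly 2.4 to 3.2 times the air-cooling limit, which is why liquid cooling is treated as a requirement rather than an option for such racks.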

Frequently Asked Questions

Why are data centers moving to nuclear energy?

Data centers require 24/7 carbon-free baseload power that traditional renewables (wind and solar) cannot provide without massive battery storage. Nuclear offers the scale and reliability needed for constant AI workloads.

What is the current global energy use for AI?

The figures here cover data centers as a whole rather than AI alone: data centers are projected to exceed 1,000 TWh of electricity consumption in 2026, nearly doubling their use from just three years prior.

Are there energy-efficient alternatives for AI?

While hardware is becoming more efficient, the 30% annual growth in AI adoption currently outweighs individual efficiency gains. Developers are exploring “Power Compute Effectiveness” (PCE) to measure how much actual AI output is generated per watt consumed.
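The tug-of-war between adoption growth and efficiency can be sketched with a simple ratio. This is a simplified model under two assumptions: energy use scales as workload divided by efficiency (output per watt-hour), and the 30% adoption growth translates directly into workload growth. The 15% efficiency gain is a hypothetical value chosen for illustration, not a figure from this article.

```python
def net_energy_growth(workload_growth: float, efficiency_gain: float) -> float:
    """Annual change in energy use when workload and efficiency both grow.

    Both arguments are fractions per year (0.30 means 30%). Efficiency is
    output per unit of energy, so energy scales as workload / efficiency.
    """
    return (1 + workload_growth) / (1 + efficiency_gain) - 1


if __name__ == "__main__":
    adoption = 0.30                 # annual growth figure quoted above
    hypothetical_efficiency = 0.15  # ASSUMPTION: illustrative value only
    growth = net_energy_growth(adoption, hypothetical_efficiency)
    print(f"Net energy growth: {growth:.1%}")  # about 13% per year
    # Energy use stays flat only if efficiency improves as fast as workload.
    print(f"Break-even case: {net_energy_growth(adoption, adoption):.1%}")
```

In this model, energy use keeps rising until efficiency improves at the same annual rate as the workload, which is the gap a metric like PCE is meant to track.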
